Executive Summary

When Finance asks whether Marketing caused the result, not whether revenue appeared alongside the spend, most measurement systems have no answer. Incrementality testing does. But only if it is connected to a decision.

Incrementality testing for CMOs gives you causal proof, not just platform correlation, so you can make budget decisions that hold up with Finance. However, no single test can explain the full portfolio. That is why the most effective measurement system connects incrementality testing to MMM: experiments establish ground truth, and MMM uses that truth to improve response curves and scenario planning and to guide capital allocation over time.

This blog explains what marketing incrementality measurement actually tells you, which experiments to run first, how to read incremental ROAS (iROAS) without a data scientist, and how to avoid turning testing into measurement theater.

It also explains what separates a measurement program that produces receipts from one that compounds intelligence: the closed loop between experiments and the model that makes the next budget decision.

Key Takeaways

A good test should change a budget decision. Otherwise, it is just reporting.

Incrementality testing tells you whether marketing caused lift, not whether revenue appeared alongside spend.

Geo holdouts, switchbacks, and pacing tests each answer a different capital allocation question, and the right choice depends on your channel, not your calendar.

MMM and incrementality testing should function as a single system, not two separate workflows; experiment results should update model coefficients automatically.

iROAS is the causal efficiency metric that matters for reallocation decisions.

In 2026, AI-driven bidding platforms can contaminate geo experiments silently; a clean test design requires detecting this before it corrupts your results.

What is incrementality testing?

Incrementality testing is a method used to measure whether marketing caused a result that would not have happened otherwise. It helps separate true lift from conversions that would have occurred anyway. In simple terms, it shows the net-new impact created by your campaign.

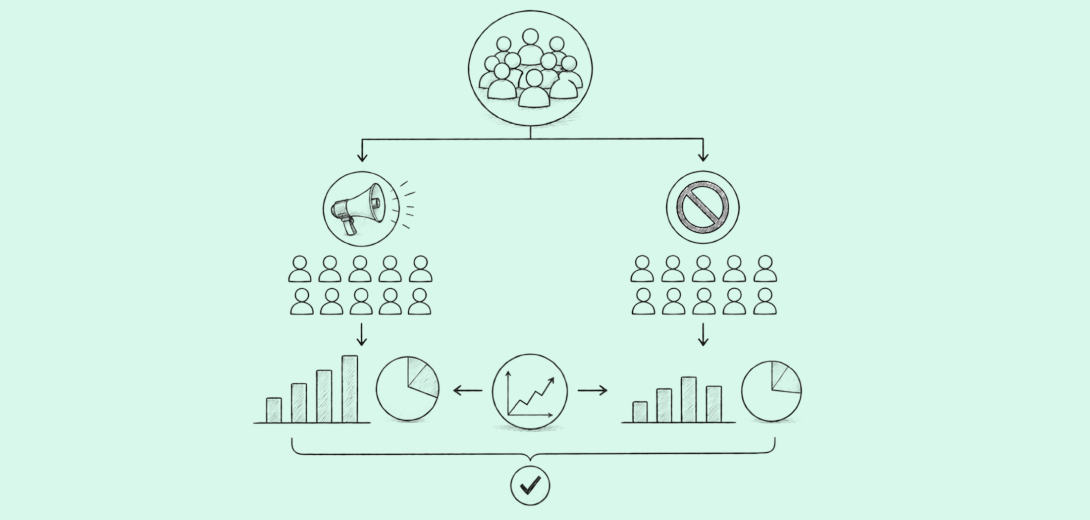

How does incrementality testing work? It works by comparing a group exposed to the campaign with a control group that was not exposed. The difference in outcomes between the two groups is the campaign’s incremental lift.

The Budget Room Problem

The hardest question in the budget room is usually the simplest one: Did that campaign actually cause the result? Platform ROAS cannot answer it. Attribution cannot either. And when Finance asks whether growth was created or merely captured, you need something stronger than a dashboard to justify it.

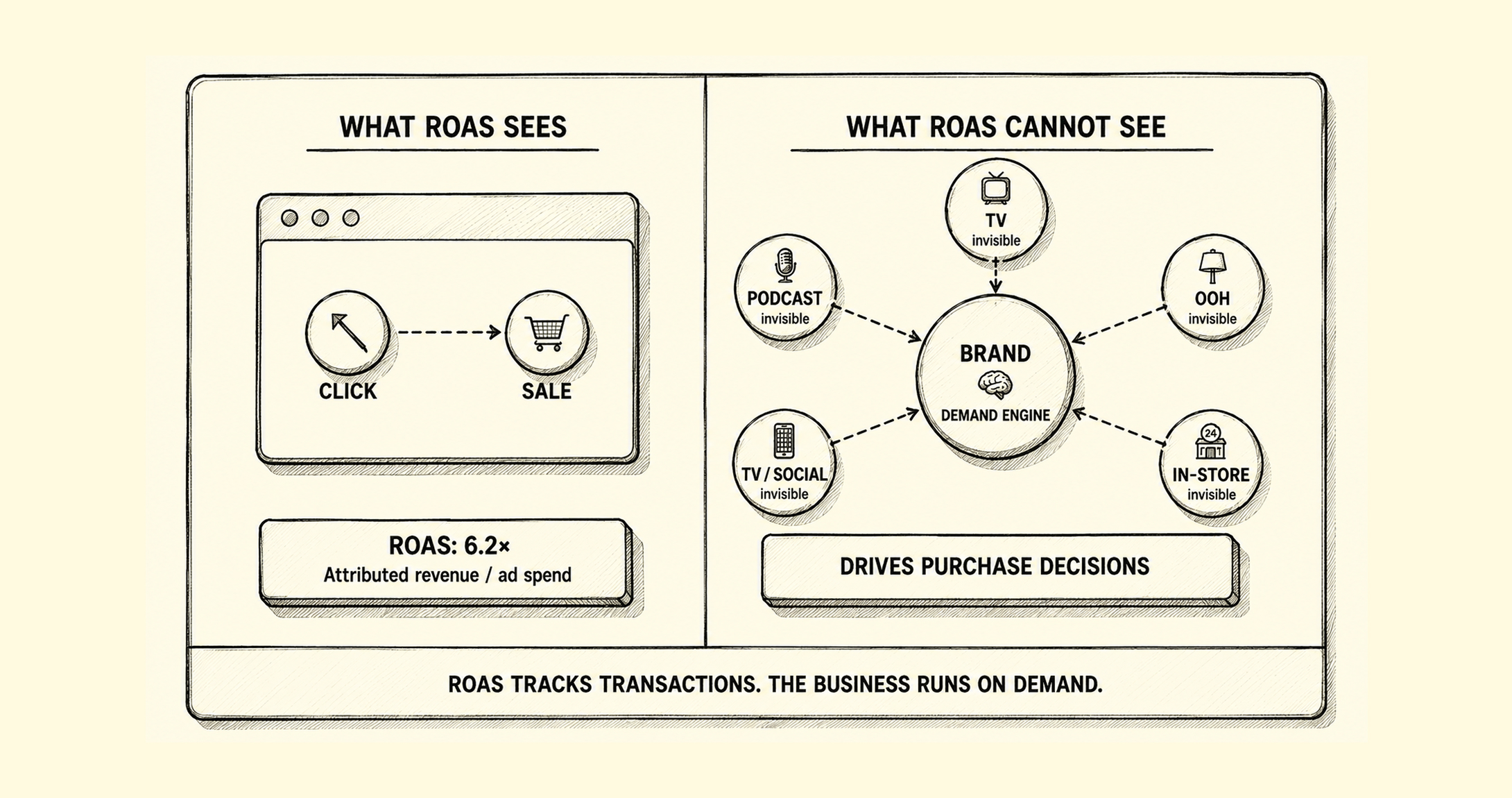

Most marketers rely on ROAS, but ROAS is misleading because it measures correlation, not causation. This is exactly the problem incrementality testing solves.

Incrementality testing for CMOs measures whether marketing caused an outcome that would not have happened otherwise. In practice, that makes it a governance tool, not just a measurement exercise.

In IAB’s 2026 research, 67% to 76% of buy-side decision-makers said they use at least one of the advanced measurement approaches: incrementality tests, attribution analysis, and marketing mix models (MMM). Yet only 39% said they use all three together, meaning most organizations still have fragmented truth, not a unified measurement system to guide decisions.

“CMOs that can move from causal proof → capital decision will be the ones that keep budget authority when scrutiny rises”

What Incrementality Testing Actually Measures (And What It Doesn’t)

What is incrementality testing? It measures causal lift. It answers the only question that really matters: would this sale, signup, lead, or visit have happened without the marketing campaign? If the answer is yes, the channel did not create lift. If the answer is no, the channel generated incremental value.

Incrementality testing measures the causal lift of a marketing intervention: the additional outcomes that would not have occurred without it. It uses controlled experiments, typically geo holdouts or switchback tests, to isolate true cause from coincident demand.

The logic is simple for any executive room. One group is exposed to the campaign, another is not, and the gap between the two is the lift. That is the essence of a marketing incrementality test. Some teams call it incremental testing, but the principle is the same: separate cause from correlation.
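The exposed-versus-unexposed comparison can be sketched in a few lines. This is a minimal illustration, not a production method: the function name and the revenue figures are hypothetical, and it assumes the holdout group's growth rate is a fair stand-in for what the exposed group would have done without the campaign.

```python
def incremental_lift(exposed_pre, exposed_post, holdout_pre, holdout_post):
    """Estimate lift by scaling the exposed group's baseline by holdout growth."""
    # Counterfactual: what the exposed group would likely have done with no campaign.
    counterfactual = exposed_pre * holdout_post / holdout_pre
    lift = exposed_post - counterfactual
    return lift, lift / counterfactual


# Hypothetical revenue figures (in $K) for the pre-period and the test window.
lift, lift_pct = incremental_lift(
    exposed_pre=1000, exposed_post=1200, holdout_pre=500, holdout_post=525
)
print(f"Incremental revenue: {lift:.0f}K ({lift_pct:.1%} over the counterfactual)")
```

The exposed group grew by 200K, but the holdout shows that part of that growth would have happened anyway. Only the remainder counts as lift.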

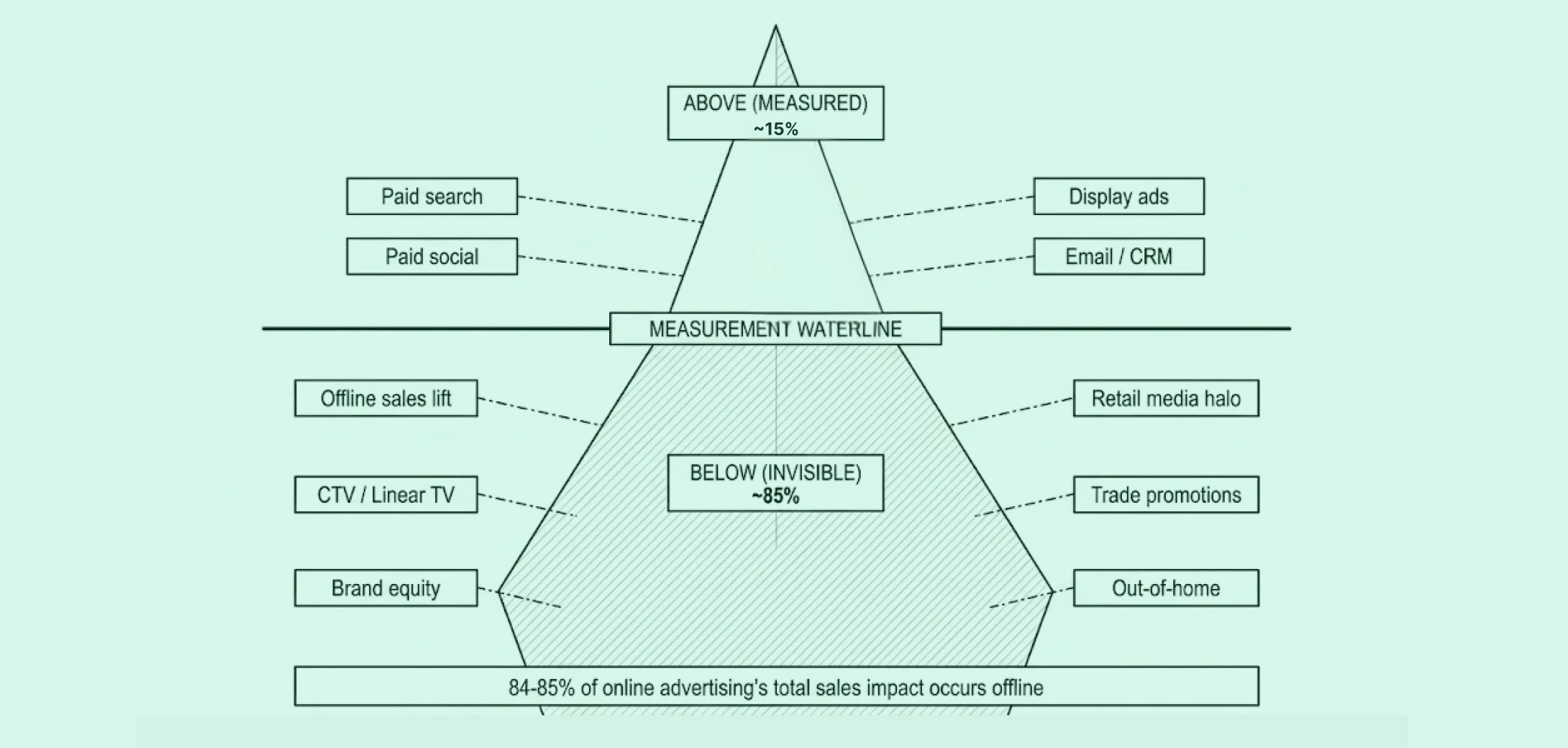

What incrementality testing does NOT do is just as important. A single experiment answers one question, in one window, for one intervention, against one outcome. It does not provide a full-funnel view of performance, nor does it fully capture long-term brand effects or account for cross-channel interactions.

This is why the most effective incrementality programs are not standalone: every experiment should return a result that makes the planning model permanently more accurate. LiftLab’s Trust Engine is built around this principle; experiment results update model coefficients automatically, so each test compounds the intelligence of every decision that follows.

How to Run Incrementality Tests: 3 Experiments Every Team Should Start With

A strong incrementality testing methodology starts with the budget question, not the test format. The 3 main types of incrementality tests are:

Geo holdout test (Go Dark)

Switchback test

Saturation (pacing) test

Geo holdout (Go Dark)

Purpose: Suppress a channel in selected markets to see whether revenue drops without it.

When to use it: Use this when you need to know whether a channel is driving true incremental revenue or simply harvesting demand you already own.

This is the cleanest answer to the question, “If we turned this off, what would happen?” It is especially useful for maintain-or-cut decisions.

One practical risk that has grown significantly in 2026 is automated budget redistribution by AI-driven bidding platforms. Performance Max and Meta Advantage+ continuously identify high-converting audiences and geographies, including markets you have designated as controls. When a platform reallocates spend to a suppressed geo, the control group is contaminated, with no visible signal in standard campaign reporting. LiftLab’s PlatformSense monitors daily changes in auction dynamics and budget redistribution patterns, flagging contamination before it corrupts the final lift calculation. Clean experiments are not just a design question; they require active monitoring throughout the test window.
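To make the contamination risk concrete, here is a simplified check a team could run on its own spend exports. It is a sketch under stated assumptions, not a description of how PlatformSense works: it assumes you can export daily spend by geography from the ad platform, and the function name, geos, and figures are hypothetical.

```python
def flag_contamination(daily_spend, holdout_geos, tolerance=0.0):
    """Return (day, geo, spend) for each holdout geo that received spend above tolerance."""
    flags = []
    for day, spend_by_geo in sorted(daily_spend.items()):
        for geo in holdout_geos:
            spend = spend_by_geo.get(geo, 0.0)
            if spend > tolerance:
                flags.append((day, geo, spend))
    return flags


# Hypothetical platform spend export: day -> {geo: spend in dollars}.
daily_spend = {
    "2026-03-01": {"Denver": 0.0, "Austin": 410.0},
    "2026-03-02": {"Denver": 37.5, "Austin": 395.0},
}
flags = flag_contamination(daily_spend, holdout_geos=["Denver"])
print(flags)
```

Running a check like this daily, rather than once at readout, is what allows a leak to be caught while the test can still be corrected.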

Switchback test

Purpose: Alternate spend on and off across time windows instead of geographies.

When to use it: Use this for high-velocity channels like Meta or Google when a geo holdout is impractical or leakage risk is high.

Switchbacks are often the cleanest experiment design for fast-moving paid media when static geo splits are not feasible. When geography is messy, time becomes the experimental lever.
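A switchback can be pictured as a randomized on/off schedule followed by a comparison of average outcomes. The sketch below is illustrative only: the function names and numbers are hypothetical, and it ignores carryover between windows, which a real design would usually handle with washout periods.

```python
import random
from statistics import mean


def assign_switchback(n_windows, seed=0):
    """Randomly order an even split of spend-on and spend-off time windows."""
    schedule = ["on"] * (n_windows // 2) + ["off"] * (n_windows - n_windows // 2)
    random.Random(seed).shuffle(schedule)  # seeded so the schedule is reproducible
    return schedule


def switchback_lift(schedule, outcomes):
    """Average outcome in spend-on windows minus average in spend-off windows."""
    on = [y for s, y in zip(schedule, outcomes) if s == "on"]
    off = [y for s, y in zip(schedule, outcomes) if s == "off"]
    return mean(on) - mean(off)


schedule = assign_switchback(8)
# Hypothetical conversions per window for a fixed four-window schedule.
lift = switchback_lift(["on", "off", "on", "off"], [120, 100, 130, 110])
print(schedule, lift)
```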

Pacing/Saturation test

Purpose: Vary spend levels across markets to build a response curve.

When to use it: Use this when you already know a channel works and now need to know where diminishing returns begin before you overfund the channel.

This is where incrementality testing stops being a measurement exercise and becomes a capital allocation tool.
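One simple way to turn pacing results into a response curve is to fit a power curve, revenue = a * spend^b, where an exponent b below 1 signals diminishing returns. The curve form, the function name, and the data below are assumptions chosen for illustration; other curve shapes are common in practice.

```python
import math


def fit_power_curve(spend, revenue):
    """Fit revenue = a * spend**b by least squares on log-transformed values."""
    xs = [math.log(s) for s in spend]
    ys = [math.log(r) for r in revenue]
    x_bar = sum(xs) / len(xs)
    y_bar = sum(ys) / len(ys)
    b = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    a = math.exp(y_bar - b * x_bar)
    return a, b


# Hypothetical pacing test: weekly spend and incremental revenue (in $K) by spend tier.
spend = [50, 100, 200, 400]
incremental_revenue = [92, 130, 181, 259]
a, b = fit_power_curve(spend, incremental_revenue)
print(f"revenue ~ {a:.1f} * spend^{b:.2f}")  # b below 1 indicates diminishing returns
```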

With LiftLab, geo holdouts show whether a channel is incremental at BAU, switchback tests show whether that lift holds across spend-on / spend-off periods, and pacing tests show how far you can scale before returns weaken.

LiftLab brings all three designs into a unified measurement system. LiftLab’s default is Stratified Random Sampling of balanced DMAs because it delivers conservative, transparent, and finance-auditable results. Synthetic controls are a viable method in specific scenarios where randomized sampling is not feasible. Across every design, LiftLab also protects result integrity through spend contamination detection, including spillover from channels like Performance Max into suppressed geos.
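For readers who want to see what stratified random sampling of markets means mechanically, here is a generic sketch. It is not LiftLab's implementation: the function name, the stratum size, and the DMA baselines are hypothetical, and stratifying on a single baseline metric is a simplifying assumption.

```python
import random


def stratified_holdout(baseline_by_dma, stratum_size=2, seed=0):
    """Rank DMAs by baseline, chunk into strata, and draw one holdout DMA per stratum."""
    rng = random.Random(seed)  # fixed seed so the selection can be reproduced in an audit
    ranked = sorted(baseline_by_dma, key=baseline_by_dma.get, reverse=True)
    strata = [ranked[i:i + stratum_size] for i in range(0, len(ranked), stratum_size)]
    holdout = [rng.choice(stratum) for stratum in strata]
    exposed = [dma for dma in ranked if dma not in holdout]
    return holdout, exposed, strata


# Hypothetical weekly baseline revenue (in $K) by DMA.
baseline = {
    "New York": 900, "Los Angeles": 850, "Chicago": 500,
    "Dallas": 480, "Denver": 210, "Austin": 200,
}
holdout, exposed, strata = stratified_holdout(baseline)
print("Holdout:", holdout)
print("Exposed:", exposed)
```

Because every DMA in a stratum has the same chance of selection and the seed is recorded, the holdout list can be regenerated and checked by someone outside the marketing team.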

How to Read Incrementality Results Without a Data Scientist (iROAS Explained)

If you are a CMO, you do not need a statistics lecture on incrementality. You need something more useful: a way to read results in business language and decide what changes next quarter.

Start with the number that matters most: incremental ROAS (iROAS), not platform ROAS. This is not the return on all revenue that happened while the campaign was live. It is the return on the revenue the campaign actually caused.

iROAS (incremental ROAS) is the return on the spend that actually caused an outcome, not the return on all spend that ran alongside it. It is the only ROAS figure that can inform a capital allocation decision with causal confidence.

Then read the confidence interval like an executive, not a statistician. It is a range of plausible outcomes. If that range includes zero, the channel may have had no measurable effect. If the entire range sits above zero, you have evidence of lift strong enough to inform action.
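The arithmetic behind that reading is short. The sketch below assumes the test reports an incremental revenue estimate with a standard error and uses a normal approximation for a roughly 95% range; the function names and figures are hypothetical, and small tests may call for a different interval method.

```python
def iroas_with_interval(incremental_revenue, standard_error, spend, z=1.96):
    """Return iROAS and an approximate 95% range (normal approximation)."""
    point = incremental_revenue / spend
    low = (incremental_revenue - z * standard_error) / spend
    high = (incremental_revenue + z * standard_error) / spend
    return point, low, high


def read_result(low, high):
    """Translate the range into a plain-language reading."""
    if low > 0:
        return "evidence of lift"
    if high < 0:
        return "evidence of a negative effect"
    return "inconclusive: range includes zero"


# Hypothetical test: $150K incremental revenue, $50K standard error, $100K spend.
point, low, high = iroas_with_interval(150_000, 50_000, 100_000)
print(f"iROAS {point:.2f} (range {low:.2f} to {high:.2f}): {read_result(low, high)}")
```

With the same point estimate but a standard error of $100K, the range would cross zero and the reading would change to inconclusive, even though the headline iROAS looks identical.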

Did this test change what you would have spent next quarter?

That is the question most teams skip. If the answer is no, the test produced information but not a decision.

LiftLab surfaces iROAS and confidence ranges in business language, not statistical shorthand. More importantly, the result does not stay trapped in a one-off report. The Trust Engine™ feeds results directly back into the AMM (the model behind LiftLab’s planning system), tightening response curves and improving scenario planning so your next capital allocation decision is more reliable than your last one.

The practical limit of standalone iROAS is that it answers a historical question: did this channel produce incremental return in this window? The more useful question for budget planning is: what is this channel’s current marginal iROAS, the return on the next dollar, not the average return on all dollars spent? LiftLab’s Agile MMM uses experimental iROAS results to continuously update marginal return estimates by channel, so the Scenario Planner always reflects the most recent causal evidence rather than a number from last quarter’s test.
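The gap between average and marginal iROAS is easy to see on a simple concave response curve. The power-curve form and the parameter values here are assumptions for illustration, not LiftLab's model.

```python
def average_iroas(spend, a, b):
    """Incremental revenue per dollar across all dollars spent."""
    return a * spend ** b / spend


def marginal_iroas(spend, a, b):
    """Incremental revenue from the next dollar: the slope of the curve at this spend."""
    return a * b * spend ** (b - 1)


# Hypothetical curve: incremental revenue = 10 * spend^0.5 (both in $K).
a, b = 10, 0.5
for spend in (100, 400):
    print(spend, round(average_iroas(spend, a, b), 2), round(marginal_iroas(spend, a, b), 2))
```

On this curve, the channel breaks even on average at 100K of spend, yet the next dollar at that level returns only about half of that. Reallocation decisions hinge on that second number.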

Need a complete walkthrough on how LiftLab can drive your budget decisions?

The Incrementality Trap: When Testing Becomes Theater

The biggest failure in incrementality testing for CMOs is usually not technical. It is organizational.

An incrementality test without a pre-committed decision is measurement theater. The test result should change budget allocation; if it cannot, don’t run it.

That trap shows up in three ways:

Running tests without a decision attached: Marketing runs tests without tying them to a budget decision. The output may be interesting, but it changes nothing.

Treating experiments as the answer, rather than calibration: Teams treat the experiment as the final answer instead of the calibration input. A geo holdout on Paid Social can tell you whether Paid Social drove lift in that window, but it cannot tell you how it changes baseline demand over time or how the whole portfolio should rebalance. That requires recalibration with MMM.

Over-testing confident channels, under-testing uncertain ones: Teams over-test the channels they already trust and under-test the uncertain ones. That is backward. The right experimentation roadmap is driven by uncertainty, not comfort.

Most teams test Paid Social because it is the highest-spend channel, and they want to defend it. The channels that should be tested are the ones where the model’s coefficient uncertainty is highest, often brand, audio, or CTV, where historical spend variation has been too low to produce reliable estimates.

LiftLab closes that gap by letting the Agile MMM identify which channels have the highest uncertainty in their response estimates. Those channels become the testing roadmap. That is how you stop running experiments as side projects and start using them for capital allocation.

Incrementality Testing vs. MMM: Why the Debate Is the Wrong Frame

The Incrementality vs MMM debate misses the point.

MMM tells you how your full portfolio is performing across channels and over time. Incrementality testing tells you whether a specific intervention causes lift in a specific window. These are different jobs. The real question is not which one to use. It is whether they are connected.

That connection is now a clear marker of measurement maturity. According to BCG’s 2025 research, 40% of surveyed marketers said they use incrementality results to calibrate MMMs, a sign that CMOs need to start looking at incrementality and MMM together rather than in isolation.

| Factor | MMM | Incrementality testing |
|---|---|---|
| What it measures | Full-portfolio contribution across channels. | Causal lift from a specific intervention. |
| Time horizon | Continuous and broader, often including lag and carryover. | One defined experimental window. |
| Output type | Response curves, contribution, marginal return, scenarios. | Lift, confidence range, incremental ROAS (iROAS). |
| Primary use | Ongoing capital allocation. | Causal validation and model calibration. |
| Calibration relationship | Informed by experiments over time. | Provides the causal anchors that update MMM coefficients. |
| When to question the output | When trained on visibility-biased historical budgets. | When the test window is too short to capture the full effect. |

LiftLab’s Trust Engine™ closes this loop. The model identifies uncertainty. The experiment generates causal proof. The result recalibrates the model. And your planning confidence compounds over time instead of drifting across disconnected tools.
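The recalibration step can be pictured with a generic statistical idea: combine the model's estimate and the experiment's estimate, weighting each by how precise it is. This article does not describe how the Trust Engine performs its update, so the sketch below is only an analogy under the assumption that both estimates are roughly normally distributed; the function name and numbers are hypothetical.

```python
import math


def calibrate(model_mean, model_sd, test_mean, test_sd):
    """Precision-weighted combination of a model estimate and an experiment result."""
    w_model = 1 / model_sd ** 2  # tighter estimates get more weight
    w_test = 1 / test_sd ** 2
    mean = (w_model * model_mean + w_test * test_mean) / (w_model + w_test)
    sd = math.sqrt(1 / (w_model + w_test))
    return mean, sd


# Hypothetical: the model says iROAS 2.0 (sd 1.0); the experiment says 1.0 (sd 0.5).
mean, sd = calibrate(model_mean=2.0, model_sd=1.0, test_mean=1.0, test_sd=0.5)
print(f"Calibrated iROAS: {mean:.2f} (sd {sd:.2f})")
```

The calibrated estimate moves most of the way toward the experiment because the experiment is the more precise of the two, and the combined uncertainty is smaller than either input. That shrinking uncertainty is the compounding effect described above.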

What to Ask Your Incrementality Testing Vendor

If you are evaluating incrementality testing platforms, asking these five questions will tell you quickly whether the vendor can support proving marketing ROI to CFO standards.

Q1. How do you select geo holdout markets and can Finance audit the methodology?

You need a transparent answer, not black-box methodology or vague talk about “matched markets” and proprietary selection logic.

Green flag: Stratified Random Sampling with an auditable DMA selection rationale, not a black-box synthetic control.

Q2. How do experiment results feed back into the model, or do they sit in a separate report?

If the result does not recalibrate planning, the workflow is disconnected.

Green flag: Test results update response curves, scenario ranges, or budget recommendations inside the same measurement system.

Q3. How do you detect and correct for spend contamination in suppressed geos?

If spillover is invisible, your holdout may not be valid.

Green flag: The vendor can explain how they monitor leakage, flag contamination, and account for spillover from products like Performance Max.

Q4. Can you show a test where the result changed a specific budget allocation, and by how much?

A real platform should show a decision, not just a dashboard.

Green flag: The vendor can point to a concrete reallocation outcome. For example, budget shifted, capped, or cut based on measured lift and iROAS.

Q5. What is your process for determining which channel to test next?

The best answer is model-driven prioritization, not ad hoc curiosity.

Green flag: The experimentation roadmap is driven by measurement uncertainty, spend size, and the next budget decision on the calendar.

LiftLab has a verifiable answer to all five. Ask for a demonstration of each product, not a methodology deck.

Win the Budget Room

When Finance asks whether the campaign caused the result, you need more than a dashboard defense; you need a named test, a traceable causal outcome, and a model recalibrated around what the test proved. That is the version of incrementality testing for CMOs that earns a permanent seat in capital allocation and budget planning.

See how LiftLab connects incrementality tests to budget decisions.

The CMOs building a durable measurement advantage in 2026 are not running more experiments. They are running the right ones, guided by a model that tells them where uncertainty is highest, and making sure every result permanently sharpens the system that allocates the next dollar. If your incrementality program is producing receipts rather than compounding intelligence, that is a design question, not a data question.

FAQs

Incrementality testing vs A/B testing: what’s the difference?

A/B testing compares two versions of an experience. Incrementality testing measures whether marketing created net-new business outcomes that would not have happened otherwise. A/B testing improves execution; incrementality testing proves causal lift.

How much budget do I need to run a valid incrementality test?

There is no universal minimum. Required budget depends on baseline volume, expected lift, market noise, and how cleanly you can separate test and control. Teams often ask this question because they are trying to justify the test overhead. A more useful question is whether the revenue at stake in the allocation decision the test will inform justifies the spend and time required to run it.

How long does an incrementality test take?

Most tests run for several weeks, not several days. You need enough time to establish a baseline, run the intervention, and measure the outcome. The right duration is driven by signal quality, not convenience.

Can incrementality testing measure brand and upper-funnel channels?

Yes, especially through geo holdouts, switchbacks, and pacing tests where attribution is weak. But the test should be used to calibrate MMM, not replace it, if you want to understand long-term brand effects and cross-channel effects.

What happens when incrementality test results contradict MMM outputs?

That is not a failure. It is a signal. The experiment gives you local causal truth; MMM gives you portfolio context. When they disagree, you investigate the gap and recalibrate the model. LiftLab’s Trust Engine is specifically designed to resolve this disagreement systematically: experiment results update model coefficients, so the contradiction becomes the mechanism by which the system gets more accurate, not a reason to distrust either method.