Executive Summary

In 2026, measurements that live inside a marketing dashboard are no longer sufficient. Enterprise leaders need MMM platforms that function as capital-allocation engines, systems capable of earning a seat at the boardroom table, not just a line in the quarterly marketing review.

Each quarter, enterprise marketing teams walk into budget reviews armed with dashboards, attribution summaries, and efficiency metrics. The numbers often look fine until the CFO asks harder questions: What actually drove the result? How confident are we in it? What should change next quarter? That moment exposes the real problem.

This is not a data issue. It is a measurement architecture issue.

Gartner reports that marketing budgets remain flat at 7.7% of company revenue, and 59% of CMOs report insufficient budget to execute their strategy.

But the deeper problem isn’t the budget ceiling; it’s the inability to clearly demonstrate impact to defend it.

A Gartner survey found that 84% of companies are stuck in a brand measurement doom loop, where underfunded measurement leads to unclear impact, rising skepticism, and tighter budgets. Companies caught in that cycle are half as likely to exceed organizational growth targets as those that can successfully generate return on marketing.

It is no longer just a measurement conversation. It is a governance conversation. MMM platforms must not only explain past performance — they must guide future investment.

This guide evaluates leading platforms through a single lens: whether they can improve capital allocation. It covers the five capabilities that matter most in 2026, compares LiftLab, Measured, Recast, Haus, Mutinex, and Analytic Partners, includes a build-vs-buy view on Google Meridian and Meta Robyn, and closes with a weighted RFP scorecard for buyers evaluating vendors on the capabilities that separate genuine planning systems from sophisticated reporting tools.

What is a Marketing Mix Modeling Platform?

A marketing mix modeling platform is a measurement and planning system that uses statistical models to estimate how different marketing channels influence business outcomes, such as revenue, conversions, or profit. Unlike attribution tools, MMM platforms rely on aggregated data, making them better suited for full-funnel marketing measurement and guiding capital allocation decisions.

The 2026 MMM Landscape: Why the Old Playbook No Longer Works

The Paradox of Growth

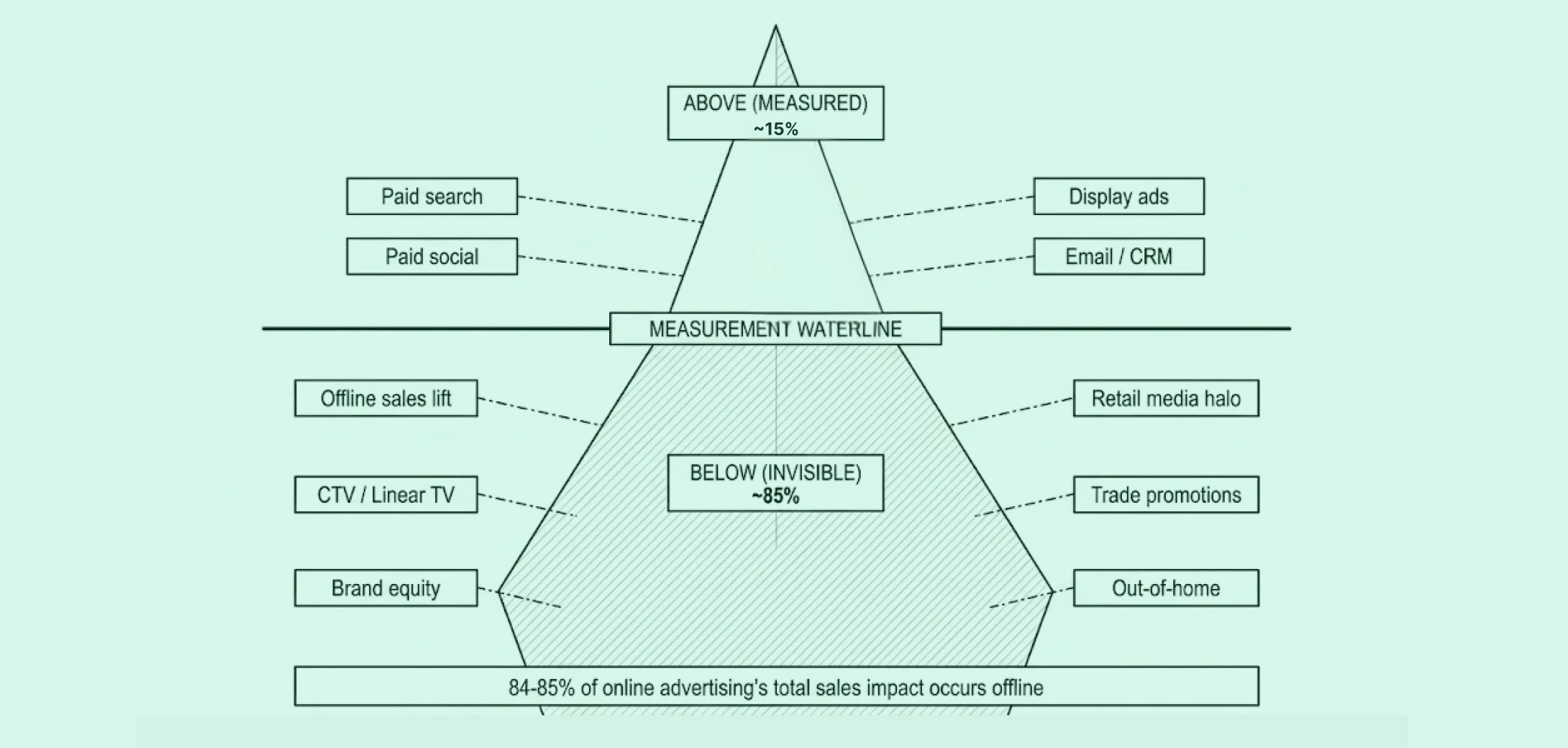

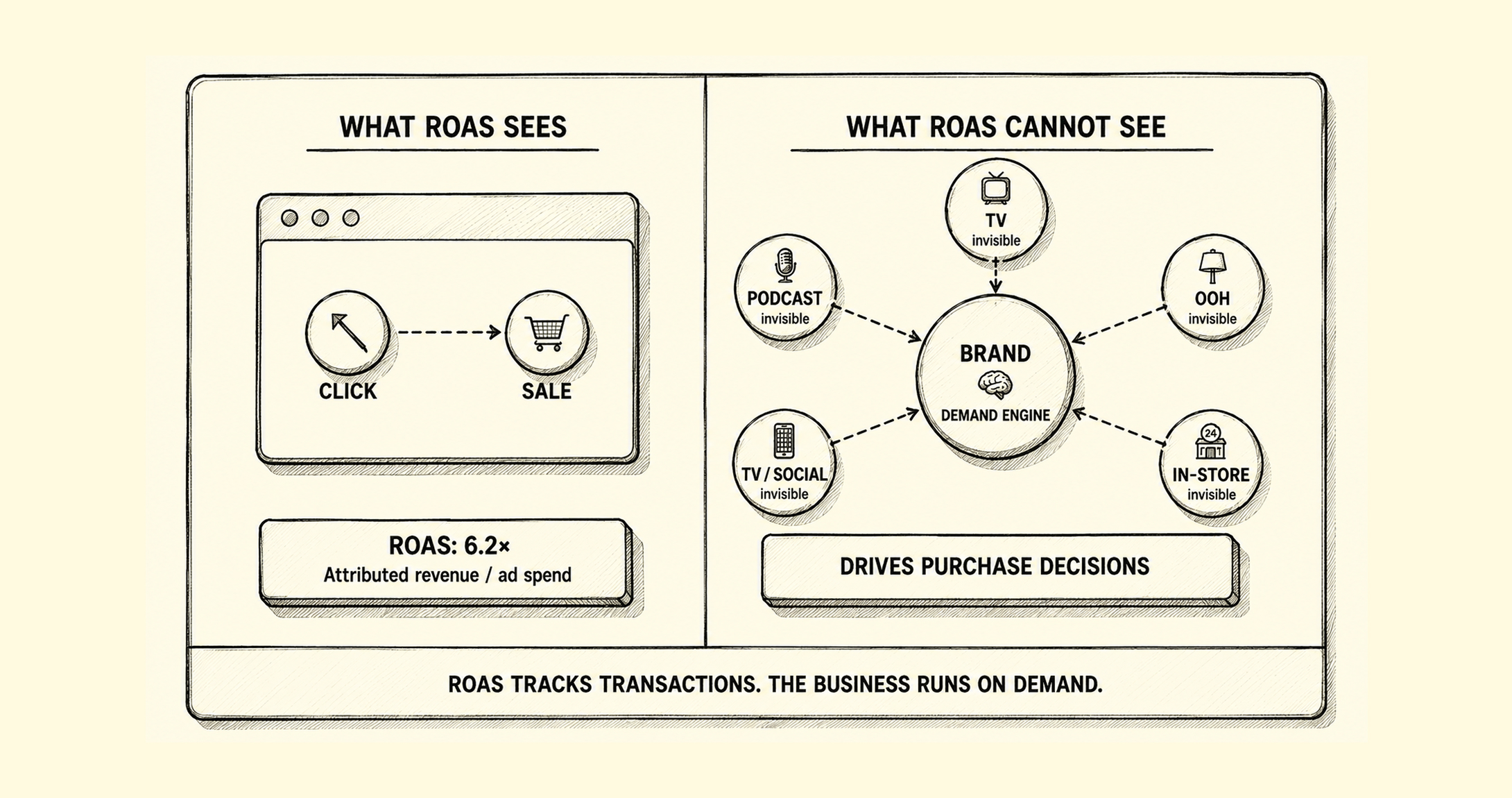

The old playbook rewards demand capture and starves demand creation. Search and retargeting look efficient because they harvest intent that brand investment built, but measurement credits the harvester and ignores the farmer. As brand spend is cut and performance spend grows, baseline demand erodes, CAC rises, and growth stalls. It is a critical error to mistake exposure for persuasion.

For the full framework on how this plays out on the P&L, and how to break the cycle, read: From Measurement to Capital Allocation →

The Measurement Confidence Crisis

The defining issue in 2026 is not a shortage of data; it is a widening gap between what platform dashboards report and what modeled, causal measurement reveals. Most marketers now run the same spend through multiple reporting systems and receive conflicting signals: ad platform-reported ROAS that does not reconcile with MMM contribution estimates, incrementality results that contradict last-touch attribution, and holdout tests that challenge both. The result is a credibility problem. Measurement exists, but confidence in it does not, and that gap is exactly what collapses under a CFO’s first hard question.

The Lagging Intelligence Crisis

Traditional MMM approaches often arrive with a structural flaw: by the time the insight is ready, the market has already moved. IAB’s guidance on modernizing MMM makes the implications clear: faster refreshes, broader inputs, and direct integration into planning are now table stakes for enterprise measurement. If the model becomes useful only after the planning window closes, it is no longer a strategic input. It is a backward-looking artifact.

The Real Destination: Capital Governance

The two most expensive measurement failures in 2026 are double-counting, where multiple attribution systems claim credit for the same conversion, and ignoring incrementality, where budget decisions are made on correlated data rather than causal evidence. Both are architectural problems, not data problems. MMM is no longer just a reporting layer; it is a capital governance layer that resolves these failures by establishing a single, model-grounded view of what spend actually caused.

Where Are You Today? The Marketing Measurement Maturity Model

Stage 1: Reporting (The Dashboard Era)

Teams rely on platform-reported ROAS, attribution tools, and dashboards. The problem is not the reporting itself; it is the underlying metrics. Platform-reported numbers are directional indicators measured within walled gardens, not true performance numbers.

Meta’s ROAS and Google’s ROAS are labeled “attributed,” but “influenced” would be a more accurate name. Because each figure is measured inside its own walled garden, the two cannot be compared with one another; each can only be tracked over time within the same platform. Budget decisions that rest on cross-channel comparisons of these metrics rest on a flawed premise.

Stage 2: Measurement (The Incrementality Era)

MMM and experiments improve causal understanding, but insights remain retrospective and siloed. The output does not integrate cleanly into planning cycles or finance reviews.

Stage 3: Capital Allocation (The Econometric Era)

MMM, experimentation, and financial planning are unified into a single decision-making system. Teams can answer clearly: What is the marginal ROI of the next dollar? Where are diminishing returns showing up? What is the optimal executable budget under real constraints?

Most enterprise teams today operate between Stage 1 and Stage 2. The platforms in this guide differ significantly in how far they take buyers toward Stage 3.

The 5 Non-Negotiable Capabilities for 2026 Marketing Mix Modeling Platforms

These five capabilities define the difference between a measurement tool and a capital allocation system. They are presented as buyer requirements, not as a feature checklist for any single vendor. Where relevant, the discussion notes how different platforms approach each capability, since the right answer for your team depends on your operating model.

Daily Agility Without Sacrificing Stability: One of the most important evaluation criteria in any MMM platform assessment is the ability to respond to market change without exposing the model to noise. This is the stability-responsiveness trade-off: too stable, and the model becomes stale; too responsive, and it chases short-term noise. The right architecture separates structural response curves, which should be stable, from fast-moving effectiveness signals, which should update frequently.

Platforms handle this differently. LiftLab’s PlatformSense incorporates daily ad platform signals as effectiveness modifiers without re-estimating the full model each day. Recast uses weekly re-estimation cycles within a sliding data window designed to maintain stability while gradually adapting to changes in advertising response. Measured and Analytic Partners operate on managed refresh cadences tuned to client reporting cycles.
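
One way to picture the separation between a stable structural curve and a fast-moving effectiveness signal is the minimal Python sketch below. It is an illustration only, not any vendor's method: the Hill-style curve, the smoothing factor `alpha`, the clipping band, and all numbers are assumptions chosen for the example.

```python
def hill_response(spend, max_effect, half_saturation):
    """Structural response curve: saturating outcome for a given spend.
    In this sketch it is re-estimated rarely, so it stays stable."""
    if spend <= 0:
        return 0.0
    return max_effect * spend / (half_saturation + spend)


def smoothed_modifier(daily_signals, alpha=0.2, floor=0.7, cap=1.3):
    """Exponentially weighted average of a daily effectiveness index
    (1.0 = baseline), clipped to a band so one noisy day cannot dominate."""
    level = 1.0
    for signal in daily_signals:
        level = alpha * signal + (1 - alpha) * level
    return min(cap, max(floor, level))


# Hypothetical daily index for one channel; 0.6 is a one-day outlier.
daily_index = [1.0, 1.05, 0.6, 1.1, 1.08, 1.12]

modifier = smoothed_modifier(daily_index)
baseline = hill_response(50_000, max_effect=400_000, half_saturation=80_000)
adjusted = baseline * modifier  # structural curve scaled by the fast signal

print(f"modifier={modifier:.4f} baseline={baseline:,.0f} adjusted={adjusted:,.0f}")
```

The design point is that the daily signal only scales the output of the curve; the curve's own parameters are untouched, so a single outlier day moves the estimate by a few percent rather than reshaping the model.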

When evaluating vendors on this dimension, ask specifically how the platform separates durable channel response from transient platform noise, and request a demonstration with real channel data.

Transparent, Auditable Incrementality Calibration: The strongest MMM platforms show how their confidence was built, not just what the output says. Explainability is what turns measurement into governance, and it is the requirement that turns a marketing-facing tool into one Finance and Legal will trust.

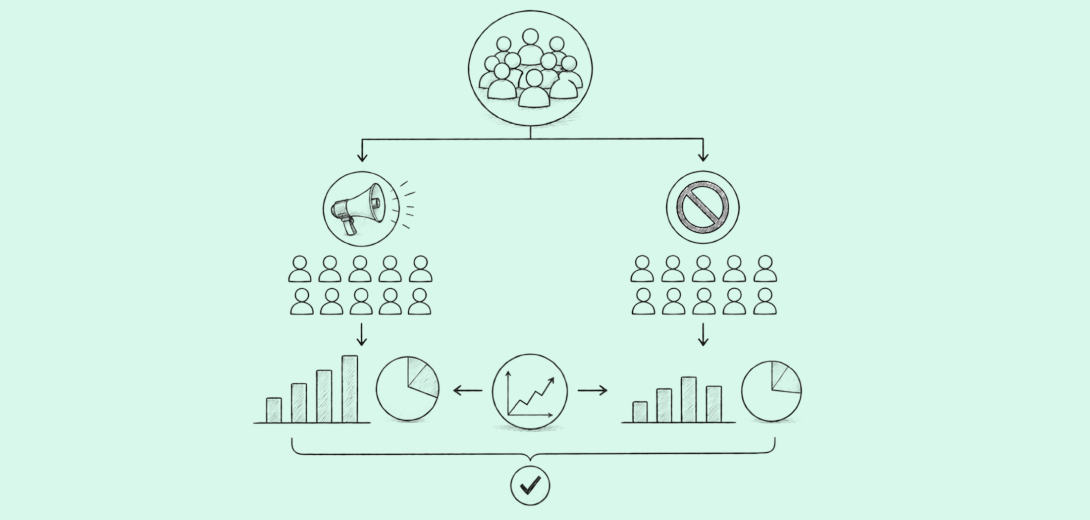

Different platforms approach calibration differently. Measured and Haus center their architectures on causal experiments, with geo-lift testing as a primary calibration mechanism.

Recast’s Bayesian methodology produces uncertainty ranges around channel estimates, making the model’s confidence visible. LiftLab’s Trust Engine™ closes the loop between experimentation and the MMM itself; experiments calibrate the model, and model outputs inform better experiment design.
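
To make "an experiment calibrates the model" concrete, here is a minimal sketch of one textbook way it can work: a precision-weighted (normal-normal) update that blends the model's prior estimate of a channel's incremental ROI with a geo-test readout. This is an assumption for illustration, not a description of how Recast, LiftLab, or any other vendor implements calibration, and the numbers are hypothetical.

```python
def calibrate_with_experiment(prior_mean, prior_sd, test_mean, test_sd):
    """Combine a model estimate and an experiment result, weighting each
    by its precision (1 / variance). Returns posterior mean and sd."""
    prior_precision = 1.0 / prior_sd ** 2
    test_precision = 1.0 / test_sd ** 2
    post_precision = prior_precision + test_precision
    post_mean = (prior_mean * prior_precision
                 + test_mean * test_precision) / post_precision
    post_sd = post_precision ** -0.5
    return post_mean, post_sd


# Hypothetical inputs: the model believes incremental ROI is 2.0 (sd 0.8);
# a geo-test measures 1.2 (sd 0.4).
post_mean, post_sd = calibrate_with_experiment(
    prior_mean=2.0, prior_sd=0.8, test_mean=1.2, test_sd=0.4
)
print(f"calibrated ROI = {post_mean:.2f} +/- {post_sd:.2f}")
```

Because the test is the more precise of the two inputs, the calibrated estimate lands closer to the test result, and its uncertainty is narrower than either input alone. That before-and-after trail is the kind of traceability an auditable calibration process should be able to show.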

When evaluating vendors, ask them to show how a specific geo-test result changed a model coefficient and what that meant for a subsequent budget decision. A strong answer will be specific and traceable.

Diminishing Returns and Marginal ROI in Business Language: Average ROI tells you how the spend has performed to date. Marginal ROI tells you what the next dollar is likely to do. Response curves and saturation points are core analytical outputs for any platform making budget recommendations, but the differentiator is whether those outputs are expressed in analyst terms or in language a CFO can act on when reviewing a budget proposal.

Recast, Measured, and Analytic Partners all produce marginal ROI and response curve outputs. The question to ask in any vendor demonstration is not whether the platform has these features, but how they surface in a planning workflow. Can a non-technical stakeholder read a saturation curve and understand what it means for the next quarter’s allocation? Can the system generate a recommendation that finance can review without a modeling team present to interpret it?
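
The gap between average and marginal ROI is easy to see with a small worked example. The sketch below assumes a simple saturating (Hill-style) response curve with hypothetical parameters; real platforms fit their own curve shapes, so treat the function and the numbers as illustrative only.

```python
def hill_revenue(spend, max_revenue, half_saturation):
    """Revenue attributed to a channel at a given spend (saturating curve)."""
    return max_revenue * spend / (half_saturation + spend)


def average_roi(spend, max_revenue, half_saturation):
    """Revenue per dollar across all spend to date."""
    return hill_revenue(spend, max_revenue, half_saturation) / spend


def marginal_roi(spend, max_revenue, half_saturation, step=1.0):
    """Revenue produced by the next `step` dollars, per dollar."""
    gain = (hill_revenue(spend + step, max_revenue, half_saturation)
            - hill_revenue(spend, max_revenue, half_saturation))
    return gain / step


# Hypothetical channel: curve tops out at $1M, half of that reached at $200k.
spend = 300_000
avg = average_roi(spend, max_revenue=1_000_000, half_saturation=200_000)
marg = marginal_roi(spend, max_revenue=1_000_000, half_saturation=200_000)
print(f"average ROI = {avg:.2f}, marginal ROI = {marg:.2f}")
```

In this example the channel shows an average ROI of 2.0, yet the next dollar returns roughly 0.80. A dashboard reporting only the average would suggest adding budget; the marginal figure says the channel is already past the point where additional spend pays for itself.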

LiftLab’s approach makes channel response curves, saturation points, and marginal ROI visible directly in budget-planning outputs, framed in terms of business impact rather than model parameters.

Constraint-Aware Scenario Planning: A budget recommendation is only useful if it respects real operating constraints. Committed TV upfronts, platform inventory minimums, channel-level guardrails, pacing rules, and cash-flow timing all affect what a marketing team can actually execute, yet most theoretical optimization frameworks ignore them.

This is an area where platforms diverge significantly. Analytic Partners has deep scenario planning capabilities developed over years of enterprise engagements. Measured offers planning and reporting tools with strong incrementality calibration backing them.

Both Analytic Partners and Measured offer scenario planning with some constraint inputs. The distinction with LiftLab’s Scenario Planner is integration depth: committed spend floors, platform minimums, pacing rules, and saturation effects are required inputs before the optimization runs, not parameters you adjust manually after reviewing the output.
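
The difference between constraints as inputs and constraints as after-the-fact adjustments can be sketched in a few lines. The example below is a hypothetical, simplified allocator, not LiftLab's or any other vendor's optimizer: it locks in committed floors first, then assigns the remaining budget in fixed steps to whichever channel has the highest marginal return without breaching its cap. Channel names, curve parameters, floors, and caps are all assumed values.

```python
def hill(spend, max_effect, half_saturation):
    """Concave saturating response: outcome produced by a given spend."""
    return max_effect * spend / (half_saturation + spend)


def allocate(total_budget, channels, step=1_000):
    """Greedy allocation that treats floors and caps as hard requirements."""
    # Committed spend (e.g. a TV upfront) is placed before any optimization.
    plan = {name: cfg["floor"] for name, cfg in channels.items()}
    remaining = total_budget - sum(plan.values())
    if remaining < 0:
        raise ValueError("committed floors exceed the total budget")
    while remaining >= step:
        best_name, best_gain = None, 0.0
        for name, cfg in channels.items():
            if plan[name] + step > cfg["cap"]:
                continue  # channel ceiling reached, skip it
            gain = (hill(plan[name] + step, cfg["max_effect"], cfg["half_saturation"])
                    - hill(plan[name], cfg["max_effect"], cfg["half_saturation"]))
            if gain > best_gain:
                best_name, best_gain = name, gain
        if best_name is None:
            break  # every channel is at its cap
        plan[best_name] += step
        remaining -= step
    return plan


# Hypothetical channels; "floor" for TV represents a committed upfront.
channels = {
    "tv": {"max_effect": 2_000_000, "half_saturation": 500_000,
           "floor": 300_000, "cap": 600_000},
    "search": {"max_effect": 900_000, "half_saturation": 100_000,
               "floor": 0, "cap": 250_000},
    "social": {"max_effect": 1_200_000, "half_saturation": 300_000,
               "floor": 50_000, "cap": 400_000},
}
plan = allocate(800_000, channels)
print(plan)
```

Because the floors are applied before the search for the best next dollar begins, every plan the allocator returns is executable as-is. An optimizer that ignores the upfront and is corrected afterward may recommend a mix that the remaining budget can no longer support.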

The question to ask any vendor is not whether they support constraints, but whether the optimizer treats them as hard requirements or post-hoc filters.

Long-Term Brand Equity Quantification: Long-term brand effects remain among the most underrepresented dimensions in MMM implementation. Many models rely on observation windows that are too short to capture lagged brand responses, thereby systematically pulling budget toward demand capture and away from demand creation. Over time, this creates the doom loop that Gartner has documented: underfunded brand measurement leads to unclear brand impact, which leads to skepticism, which leads to tighter brand budgets, which weakens long-term pricing power and raises CAC.

Long-term brand effects are one of the most methodologically difficult problems in MMM. The core challenge, flagged by leading academic researchers in the field, is that regression techniques applied to a few years of single-firm data do not have sufficient statistical power to reliably isolate long-run advertising effects. The only approaches with academic backing combine data from many advertisers into meta-analytic frameworks, using cross-advertiser patterns to calibrate what individual firm data cannot reveal on its own.

This is why most platforms default to short ad-stock windows or treat long-term effects as a footnote rather than a planning input. LiftLab’s long-term multiplier framework is grounded in cross-advertiser evidence rather than single-firm regression, treating long-run brand effects as a calibrated input to capital allocation rather than an estimation from observational data alone.

When evaluating vendors on this dimension, ask specifically how long-term brand carryover is modeled, what the assumed decay rate is, and whether that assumption is calibrated to your category or is a default parameter.
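
To see why the decay assumption matters, consider geometric ad-stock, a common textbook way to model carryover. The sketch below is a generic illustration with hypothetical decay rates; it is not a description of any vendor's long-term brand framework.

```python
import math


def geometric_adstock(spend_by_week, decay):
    """Carry a fraction `decay` of each week's accumulated effect
    into the following week."""
    carried = 0.0
    adstocked = []
    for spend in spend_by_week:
        carried = spend + decay * carried
        adstocked.append(carried)
    return adstocked


def half_life_weeks(decay):
    """Weeks until a one-off burst of spend has lost half its effect."""
    return math.log(0.5) / math.log(decay)


# A single burst of 100 in week 1, then nothing.
short_memory = geometric_adstock([100, 0, 0, 0], decay=0.5)
print(short_memory)

print(f"half-life at decay 0.5: {half_life_weeks(0.5):.1f} weeks")
print(f"half-life at decay 0.9: {half_life_weeks(0.9):.1f} weeks")
```

A default decay of 0.5 implies the effect halves every week, which effectively writes off brand advertising within a month. A decay of 0.9 keeps half the effect alive for more than six weeks. Which assumption a platform uses, and whether it was calibrated or simply defaulted, directly changes how much budget the model will recommend for brand channels.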

Comparing the Top MMM Platforms in 2026

This comparison is structured to help enterprise leaders answer one baseline question: which platform can move our team from measurement to budget action?

Scoring key:

Scores reflect publicly available information as of April 2026. Buyers should verify current capabilities directly with each vendor.

Note: Weights in the RFP scorecard below should be adjusted to your team’s specific measurement maturity and primary use case.

*Note – The distinction being scored is whether experiment results update model coefficients automatically within the platform workflow, or whether they require a manual re-modeling step by an analyst. Integrated calibration compounds accuracy over time; manual calibration is dependent on analyst capacity and cycle time.

** Scenario planning is the ability to model ‘what if’ budget shifts and forecast outcomes. Constraint-aware planning is the narrower, more operationally critical capability: whether the optimizer accepts hard constraints — locked upfronts, minimum floors, maximum ceilings — before generating its recommendation, rather than producing a theoretically optimal plan that then has to be manually adjusted for real-world constraints.

LiftLab: The Enterprise Capital Allocation Platform

Best for: Enterprise full-funnel leaders, CMOs, and CFOs who need measurement to function as a financial governance system, not just an optimizer layer.

Strengths: LiftLab is built as a capital allocation engine rather than a measurement reporting tool. Its architecture integrates four connected layers (Agile MMM for continuous measurement, the Trust Engine™ for experiment calibration, PlatformSense for daily channel intelligence, and a constraint-aware Scenario Planner) into one system whose outputs Finance can approve and Marketing can execute.

Considerations: LiftLab’s client roster is more selective than some larger peers, and its published case study depth reflects an earlier stage of market scale. Buyers should expect a close, consultative implementation partnership rather than a self-serve onboarding experience. For enterprise teams with complex media environments and specific planning requirements, that level of implementation depth is appropriate, but it is worth clarifying the scope and timeline expectations during the evaluation process.

Want to understand how LiftLab, one of 2026’s top MMM platforms, can be tailored to your channel mix and planning constraints? Get a live walkthrough.

Measured: Best for Causal Incrementality at Scale

Best for: Enterprise brands moving beyond MTA that need rigorous, incrementality-calibrated MMM with strong methodological transparency.

Strengths: Measured has invested in model refresh cadence, tactic-level input control, and transparency into model priors and methodology. It represents one of the more credible options for buyers prioritizing defensible causal measurement with enterprise usability and a strong experiment infrastructure behind it.

Considerations: The architecture is lighter on constraint-aware planning and on long-term brand effects than platforms that have built out full planning layers. Planning questions such as “How do we generate a fully executable budget under committed upfront constraints?” are not its primary design target.

Recast: Best for Model Transparency and Statistical Visibility

Best for: Data-heavy brands with strong internal analytics teams that want uncertainty-aware modeling and probabilistic forecasts.

Strengths: Recast’s clearest differentiator is model visibility: its live accuracy dashboards and weekly out-of-sample validation reports make the statistical machinery observable in a way that most platforms do not attempt. For teams that want to inspect forecast confidence intervals and uncertainty ranges directly, Recast surfaces that information in-platform.

Considerations: The trade-off is planning breadth and executive accessibility. Recast is more analyst-friendly than executive-friendly, and its scope on constraint-aware planning is lighter. Teams without internal analytics capacity may find the statistical depth a barrier rather than an asset.

Haus: Best for On-Demand Incrementality Testing

Best for: DTC and growth-stage brands that need fast, statistically rigorous geo-experiments without a large internal analytics team.

Strengths: Haus demonstrates genuine strength in its synthetic control methodology and experimental design tooling, making it relevant to any discussion of causal validation and incrementality measurement. The platform leans clearly into test-driven confidence and causal clarity.

Considerations: Haus reads more as an experimentation-centric measurement layer than a fully unified planning system. Buyers focused primarily on rapid incrementality validation will find it well-suited to their needs; the gap matters most for teams seeking continuous, model-driven budget reallocation rather than on-demand testing.

Mutinex: Agentic MMM Platform

Best for: Organizations interested in always-on measurement with an agentic operating layer and workflow automation built into the product experience.

Strengths: The more important evaluation question for buyers is the modeling methodology underlying the agentic layer. Mutinex’s public documentation focuses on product workflow and interface rather than model architecture, prior assumptions, or validation methodology. Buyers evaluating Mutinex should specifically ask how the underlying model is built, validated, and refreshed, and request documentation of the statistical approach before assessing the front-end capabilities.

Considerations: Mutinex’s public documentation on long-term brand equity modeling and constraint-based planning depth is limited relative to more established platforms. Buyers with large procurement teams requiring a detailed methodology review should plan for a deeper technical evaluation. Some of the capabilities relevant to this guide are less extensively documented than those of vendors with longer enterprise track records.

Analytic Partners: High-Touch Enterprise Commercial Analytics

Best for: Large enterprises that want a high-touch consultancy model, cross-market depth, and scenario planning grounded in extensive historical benchmarks.

Strengths: Analytic Partners brings strong scenario planning capabilities, broad commercial analytics framing across markets and channels, and the enterprise buyer familiarity that comes from years of working at the intersection of marketing and finance. Its cross-market data depth is a genuine differentiator for global brands.

Considerations: That depth comes with a heavier services posture and a slower operating cadence than buyers seeking a more agile, continuously refreshed planning system may want. Teams that need weekly or daily insight velocity may find the model-delivery rhythm a mismatch.

Build vs. Buy: Google Meridian and Meta Robyn

Google Meridian and Meta Robyn are legitimate open-source options for organizations with mature data science teams and the capacity to build, maintain, and interpret their own models. Both are well-documented and backed by active developer communities.

The more underestimated challenge is not pipeline infrastructure; it is the modeling expertise required to make the hundreds of specification choices that determine whether a model reflects your business reality or just fits the data.

Open-source frameworks like Meridian and Robyn come with default prior values and structural assumptions that are not always visible to the team using them, and that can materially affect the validity of outputs. Getting those choices right requires deep experience in econometrics and MMM specifically, not just data engineering. For most enterprise teams, the question is not whether they can run Meridian; it is whether they have the modeling expertise to know when its outputs should be trusted and when they should not.

The RFP Evaluation Scorecard: Questions That Separate Vendors

A note on weights: The weights below reflect a baseline enterprise buyer profile focused on capital allocation and planning. Adjust them to reflect your team’s measurement maturity, primary use case, and organizational priorities. A team at Stage 2 maturity will weigh incrementality calibration differently than one already operating at Stage 3.

| Evaluation Area | Weight | What You’re Really Testing | What a Strong Answer Looks Like |
|---|---|---|---|
| Business Representativeness & Adoption Readiness | 15% | Can the model accurately represent your specific business, your channel mix, offline and online revenue split, promotional calendar, and conversion funnel, while remaining interpretable enough for marketing, finance, and operations teams to trust and act on without a data scientist present? | Shows how the platform has modeled businesses with similar complexity to yours. Demonstrates how non-technical stakeholders interact with outputs. Is honest about the trade-off between model complexity and organizational adoption and can explain how their approach avoids overfitting to historical noise. |
| Architecture & Signal Handling | 10% | Is responsiveness to recent market changes achieved by frequently re-estimating long-term averages, which risks instability, or by incorporating additional real-time data sources as effectiveness modifiers, keeping the structural model stable while reflecting current platform conditions? | Clearly distinguishes the structural model from the fast-signal layer. Names the specific data sources used to achieve responsiveness and explains why the approach does not introduce noise into long-term response curves. Avoids vague “AI-powered” or “continuous learning” language. |
| Refresh Cadence & Responsiveness | 10% | How quickly does the system reflect meaningful market shifts? | Durable changes in platform economics are reflected quickly, with disciplined update logic that separates noise from signal. |
| Incrementality Calibration | 12% | Are experiments being used as credible causal calibration, and is the testing methodology transparent? | Shows how experiments calibrate the model and makes the methodology available for stakeholder review. |
| Methodology Transparency & Auditability | 10% | Can the methodology survive scrutiny from Finance, Legal, or technical teams? | Provides clear methodological documentation and shows how the process can be independently reviewed. |
| Marginal ROI & Diminishing Returns | 10% | Can the platform help allocate the next dollar, not just explain the last one? | Shows channel-level response curves, saturation points, and clear marginal ROI outputs in accessible language. |
| Constraint-Aware Scenario Planning | 12% | Can the system produce executable plans, not theoretical ones? | Models committed spend floors, platform minimums, pacing rules, and saturation effects within the same optimization frame. |
| Long-Term Brand Effects | 8% | Can the platform quantify brand compounding and separate it from short-term activation? | Explains how long-term brand effects are modeled, what the decay assumption is, and how it feeds into capital allocation. |
| Unified Measurement Workflow | 8% | Are MMM, attribution, and experimentation connected in one decision system? | Shows how conflicting signals are reconciled and translated into action without requiring manual interpretation. |
| Finance Integration & CMO-CFO Alignment | 10% | Can outputs be used in budgeting, planning, and board-level discussions? | Gives examples of results expressed in terms of revenue, margin, risk, uncertainty, and capital allocation trade-offs. |
| Implementation Realism & Total Cost of Ownership | 5% | Is the solution practical to adopt and sustain? | Is honest about implementation effort, services requirements, experimentation overhead, and the true total cost of ownership. |
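
For teams that want to turn the scorecard into a single comparable number per vendor, a minimal sketch follows. The 1-to-5 scoring scale and the example vendor scores are assumptions for illustration. The weights are copied from the table as listed; because they sum to 110% rather than 100%, the sketch divides by the total weight so the result stays on the same 1-to-5 scale, and it keeps working if you adjust the weights for your own priorities.

```python
# Weights as listed in the scorecard (percent).
WEIGHTS = {
    "Business Representativeness & Adoption Readiness": 15,
    "Architecture & Signal Handling": 10,
    "Refresh Cadence & Responsiveness": 10,
    "Incrementality Calibration": 12,
    "Methodology Transparency & Auditability": 10,
    "Marginal ROI & Diminishing Returns": 10,
    "Constraint-Aware Scenario Planning": 12,
    "Long-Term Brand Effects": 8,
    "Unified Measurement Workflow": 8,
    "Finance Integration & CMO-CFO Alignment": 10,
    "Implementation Realism & Total Cost of Ownership": 5,
}


def weighted_score(scores, weights=WEIGHTS):
    """Weighted average of per-area scores, normalized by total weight."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"no score given for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(weights[area] * scores[area] for area in weights) / total_weight


# Hypothetical vendor: 3 out of 5 everywhere, 5 on constraint-aware planning.
vendor_a = {area: 3 for area in WEIGHTS}
vendor_a["Constraint-Aware Scenario Planning"] = 5

score_a = weighted_score(vendor_a)
print(f"Vendor A weighted score: {score_a:.2f} / 5")
```

Raising an error on a missing area is deliberate: a vendor that was never scored on a capability should not silently receive a zero or be skipped in the comparison.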

What to Ask Beyond the Scorecard

How do you ensure model stability over time while remaining responsive to recent changes in media performance? Specifically, is your responsiveness achieved by frequently re-estimating long-term averages, or by incorporating additional real-time data sources as effectiveness modifiers? Walk us through the architecture.

Show me how an experiment’s results feed back into the MMM. Can you demonstrate a case where a geo-test changed a model coefficient and what it meant for a budget decision?

Run a constraint-aware scenario and show how a committed TV upfront changes the allocation output.

How do you model long-term brand effects, and how does that show up in the final budget recommendation?

What happens when the MMM and an incrementality test disagree?

Frequently Asked Questions

What is the difference between MMM and incrementality testing?

MMM estimates contribution across the full portfolio, while [incrementality testing](https://liftlab.com/platform/incrementality-testing-engine/) isolates causal lift for a specific intervention. The strongest modern systems use experiments to calibrate MMM and use MMM to generalize beyond any single test, making them complementary rather than competing approaches.

What is the difference between MMM and multi-touch attribution?

MMM is more resilient in a privacy-constrained environment because it does not depend on user-level tracking. Attribution can still be useful for in-channel optimization, but the strongest measurement architectures use both, with MMM providing the portfolio-level view that attribution cannot: incrementality-grounded channel contribution, marginal ROI at current spend levels, and diminishing returns curves that tell you where the next dollar should go, not just where the last dollar landed.

What is the stability-responsiveness trade-off?

It is the challenge of ensuring the model is stable enough to trust and responsive enough to reflect real market change. Too stable, and it becomes stale. Too responsive, and it chases noise. The most sophisticated platforms address this by separating structural response curves from fast-moving signal detection, delivering daily intelligence without daily re-estimation.

Can an MMM platform bridge the gap between Marketing and Finance?

Yes, but only when the output is framed in finance-ready language: capital allocation trade-offs, diminishing returns, marginal ROI, uncertainty ranges, and executable constraints. A model that produces technically accurate results in analyst language is not the same as one that produces results a CFO can act on.

84% of Companies Are Still in the Measurement Doom Loop

Breaking out doesn’t mean measuring more. It means measuring in a way that Finance can trust and Marketing can act on.

Get a live walkthrough of one of the top MMM platforms in 2026, LiftLab’s Trust Engine™, PlatformSense, and Scenario Planner, configured around your channel mix and planning constraints.
