Strategic Takeaways

Most marketing mix models (MMM) are built around short observation windows, which means they structurally miss 60–120-day brand effects. When long-term impact cannot be measured with statistical confidence, professional convention defaults it to zero. Zero is treated as fact by the optimization engine, not as an absence of evidence.

Research shows long-term advertising effects are typically 2 to 2.5 times larger than short-term effects. Defaulting to zero systematically reallocates budget away from demand creation, and the consequences (rising CAC, plateauing new customer volume, softening conversion rates) are often already visible before anyone connects them to the measurement gap.

A better approach replaces the zero assumption with evidence-based multipliers and incorporates NPV of future returns directly into optimization.

The Meeting Where Brand Lost

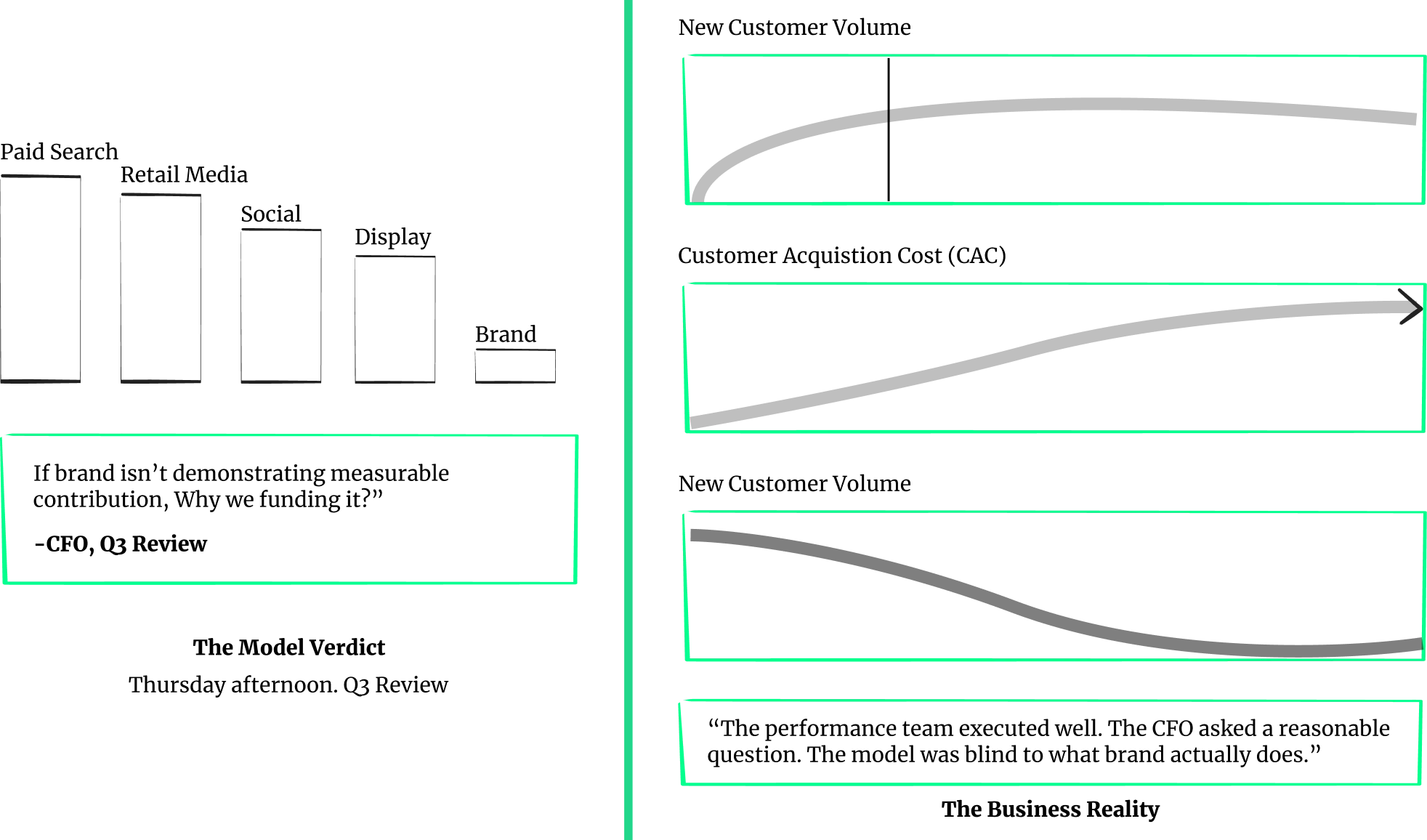

It is a mid-quarter review. Performance channels are looking strong. Paid search ROAS is up. Retail media is delivering. And sitting near the bottom of the MMM efficiency rankings, where it has been for two quarters running, is brand. The CFO asks: “If brand isn’t demonstrating measurable contribution in the model, why are we funding it?” The model agrees. Brand takes the cut. Performance gets the budget.

Six months later, something shifts. New customer volume plateaus. CAC starts climbing in ways no one can cleanly attribute to any single decision. Store traffic softens quietly in markets where brand investment was pulled. Growth has stalled in a way that is hard to explain and expensive to reverse. The performance team executed well. The CFO asked a reasonable question. The MMM gave a technically accurate answer. The problem is that the model was blind to a substantial share of long-term brand effects, and every budget decision that followed inherited that blind spot.

Quick Definitions

What is MMM?

Marketing Mix Modeling (MMM) is a statistical method that quantifies how different marketing channels contribute to sales, helping brands allocate budget based on measured impact.

What is an “Observation Window”?

The observation window is the time period a model looks back to measure advertising impact. Most MMMs are built around short windows, which means effects that take weeks or months to surface are structurally invisible to the model.

What are “Long-Term Effects”?

Long-term effects are the revenue impact that advertising generates beyond the immediate campaign period, typically through a 60-to-120-day lag, as awareness and consideration slowly build into purchase intent and demand.

Why Do Models Default to Zero?

When a model cannot find a statistically significant signal, professional convention is to set that effect to zero rather than estimate it. This is methodologically cautious but practically costly. Zero is treated as fact by the optimization engine, not as an absence of evidence.

Why MMM Defaults Long-Term Brand Effects to ZERO

Here is the reframe that most measurement conversations never reach. Many MMM implementations are technically sound. The issue is that they were never designed to observe what brand advertising does over a multi-month time horizon, and a model that cannot observe something will, by professional convention, treat its contribution as zero. Performance marketing captures existing demand. Brand marketing primes future demand among consumers who are not ready to buy today but will be at some point, and that slow-moving behavioral change is nearly impossible to isolate in a single firm’s noisy weekly data.

As Dirk Beyer, Chief Data Scientist at LiftLab, framed it in a recent webinar: long-term effects are real and important to account for from a practitioner’s perspective, yet difficult to measure using individual-firm data.

Many marketing mix models are missing those kinds of effects. That data is very noisy, and it’s a slow-moving signal.

When the model cannot find the signal with confidence, the professional default is zero. And as Dirk put it directly:

Statisticians tend to set things to zero that cannot be measured with good significance. Zero is probably the worst number you can use here. Anything that is coming from structural reasoning, from comparison to other companies in similar fields, is probably a better starting point than zero.

Consider what that default actually costs. Experimental and econometric research shows that advertising effects extend beyond the short windows most MMMs measure. Short-term lift is not the full contribution. As Dr. Koen Pauwels noted:

Long-term effects build on top of short-term effects. If there is no short-term effect, a long-term effect is unlikely. But when there is impact, the total cumulative effect is larger than what you see in the short run.

Setting long-term impact to zero is not neutral. It removes future value from the objective function and systematically reallocates budget away from demand creation. Performance channels then recycle the same in-market audiences. CAC rises. Growth slows. The model reports efficiency while the foundation of future growth weakens. Brand investment also improves the efficiency of performance channels. Excluding that mechanism structurally biases optimization toward the short term.

Signs Your Model Is Missing Long-Term Impact

The symptoms of long-term underinvestment are often already visible, just not yet connected to their underlying cause. Your MMM is probably undercounting long-term brand impact if:

Brand cuts improve short-term ROI but hurt conversion rates 60–120 days later.

Paid search/retail media ROAS is stable, but new customer volume plateaus.

Creative reach is high, but MMM says brand channels are “inefficient.”

CAC is creeping up while the spend mix skews lower funnel.

Store traffic lifts show up in geo patterns but not in weekly MMM outcomes.

These are not channel failures but measurement artifacts, and the distinction matters for every budget conversation before your next planning cycle.

A quick audit can tell you whether your model is structurally equipped to see what it is missing. Ask these questions:

What observation window does your MMM effectively credit, and does it extend beyond the immediate campaign period?

Does it include carryover effects beyond the short term, or does it treat delayed impact as zero?

Do you validate long-term effects with geo tests or holdout experiments?

Do you quantify a brand’s contribution to performance efficiency and the lift it creates in paid search, retail media, and conversion rates?

Does your optimization objective account for current profit and expected profit later, or only for the immediate return?

If most of these answers are unclear or no, the signals above are not anomalies. They may reflect a model optimized for short-term visibility rather than full economic impact.

What Research Says: The 2-2.5x Long-Term Multiplier

Across both controlled experiments and econometric studies spanning multiple industries, the evidence points to a consistent finding: long-term advertising effects are approximately 2 to 2.5 times the size of short-term effects.

Between 2 to 2.5 is a very typical average multiplier, found both in experiments and econometric studies.

The multiplier is not a fixed number applied uniformly across all brands. It varies by business, product, and target audience, including factors such as brand age, the nature of the category, and the type of advertising deployed. What this means in practice is that the 2 to 2.5x figure represents a research-backed starting point, not a one-size-fits-all answer. The appropriate multiplier for any brand is calibrated to its specific situation.

Critically, long-term effects build on top of short-term ones, which prevents this framework from becoming a justification for funding brand advertising that does not work.
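To make the arithmetic concrete, here is a minimal Python sketch. It assumes one reading of the multiplier: total cumulative effect equals the multiplier times the measured short-term effect, so the future portion is whatever remains after the short-term effect is subtracted. The function names and the example figure are hypothetical, not taken from the research cited above.

```python
def total_effect_range(short_term_effect, low=2.0, high=2.5):
    """Return (low, high) total cumulative effect for a measured short-term effect.

    Assumption: total effect = multiplier * short-term effect, with the
    multiplier somewhere in the 2 to 2.5 range quoted in the text.
    """
    if short_term_effect < 0:
        raise ValueError("short_term_effect must be non-negative")
    return short_term_effect * low, short_term_effect * high


def future_effect_range(short_term_effect, low=2.0, high=2.5):
    """Return (low, high) for the part of the effect that arrives after the short term."""
    total_low, total_high = total_effect_range(short_term_effect, low, high)
    return total_low - short_term_effect, total_high - short_term_effect


# Hypothetical example: a channel with 100,000 in measured short-term revenue.
print(total_effect_range(100_000))   # (200000.0, 250000.0)
print(future_effect_range(100_000))  # (100000.0, 150000.0)

# No short-term effect means no long-term effect under this model.
print(total_effect_range(0))         # (0.0, 0.0)
```

Because the multiplier scales the measured short-term effect, a channel with no short-term impact gets no long-term credit either, which is the guardrail described above.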

The conventional workaround has been adstock. Dirk is precise about where it earns its place:

AdStock is a relatively good tool to deal with short-term effects, because even short-term effects don’t happen the same day.

But extended further, the precision collapses.

Whenever we tried to do adstock effects that try to apportion things in the very distant future, it becomes a crapshoot to pick the right numbers.

Athena Dai, Head of Audience and Marketing Data Science at Quicken, confirmed this from building in-house MMMs:

By relying on short-term effects alone, we may run the risk of grossly undervaluing some other channels.
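The adstock point can be illustrated with a short Python sketch. It assumes geometric adstock, one common form in which a fixed fraction of each week's effect carries into the next week. The decay values are hypothetical and are not drawn from any model discussed here.

```python
def geometric_adstock(spend, decay):
    """Apply geometric adstock: each week keeps `decay` of the prior week's adstock."""
    if not 0.0 <= decay < 1.0:
        raise ValueError("decay must be in [0, 1)")
    adstocked, carry = [], 0.0
    for weekly_spend in spend:
        carry = weekly_spend + decay * carry
        adstocked.append(carry)
    return adstocked


def share_after(decay, weeks):
    """Fraction of a single burst's total effect that lands `weeks` or more weeks later.

    With weights decay**k for k = 0, 1, 2, ..., the tail from week `weeks`
    onward sums to decay**weeks of the total.
    """
    return decay ** weeks


# One burst of spend, then nothing: the carryover halves each week.
print(geometric_adstock([100, 0, 0], decay=0.5))  # [100.0, 50.0, 25.0]

# Share of the total effect credited 8 or more weeks after the burst.
for decay in (0.5, 0.7, 0.9):
    print(decay, round(share_after(decay, 8), 4))
```

Moving the decay parameter from 0.5 to 0.9 moves the share of effect credited after eight weeks from under 1% to about 43%. Noisy weekly data rarely distinguishes between such values with confidence, which is why picking a long-horizon adstock curve becomes guesswork.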

A Practical Fix: Using Evidence-Based Priors + NPV in Optimization

The better foundation combines cross-industry empirical research with reasoning calibrated to each brand’s situation. The 2 to 2.5x multiplier is an evidence-based prior customized by brand age, target population, and advertising type, then applied to measured short-term effects to produce the net present value of future advertising returns. It is validated through decades of controlled experiments and econometric studies across industries. The prior is not an assumption pulled from thin air; it is the convergence point of the most robust body of research on advertising effectiveness. As Dirk explained:

If we have both the short-term effects and the net present value of the future effects, we can actually put both of them in the objective function of the optimization. The profit we are getting now plus the profit we expect to get later.

The result, in Dirk’s words, “does not penalize those channels that might have a higher long-term multiplier but see lower short-term effects,” a direct outcome of LiftLab’s real-time optimization approach.
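A toy Python sketch of that objective follows. It is an illustration under stated assumptions, not LiftLab's implementation: the square-root response curves, the channel scales, the multipliers (1.2 for performance, 2.25 for brand), the 1% monthly discount rate, and the even spread of future effects across months 2 to 4 are all hypothetical.

```python
from math import sqrt


def npv_future_ratio(multiplier, lag_months=(2, 3, 4), monthly_rate=0.01):
    """NPV of future effects per unit of short-term effect.

    Assumes total effect = multiplier * short-term effect, with the future
    portion (multiplier - 1) arriving in equal parts in each lag month.
    """
    per_month = (multiplier - 1.0) / len(lag_months)
    return sum(per_month / (1.0 + monthly_rate) ** month for month in lag_months)


def best_brand_share(budget, channels, use_npv):
    """Grid-search the brand share (in whole percent) that maximizes the objective."""

    def objective(brand_pct):
        spend = {
            "brand": budget * brand_pct / 100,
            "performance": budget * (100 - brand_pct) / 100,
        }
        total = 0.0
        for name, (scale, multiplier) in channels.items():
            short_term = scale * sqrt(spend[name])  # concave, diminishing returns
            future = short_term * npv_future_ratio(multiplier) if use_npv else 0.0
            total += short_term + future  # profit now plus profit expected later
        return total

    return max(range(101), key=objective)


# Hypothetical channels: (response scale, long-term multiplier).
CHANNELS = {"performance": (3.0, 1.2), "brand": (2.0, 2.25)}

zero_default = best_brand_share(1_000_000, CHANNELS, use_npv=False)
with_npv = best_brand_share(1_000_000, CHANNELS, use_npv=True)
print(f"Brand share with long-term set to zero: {zero_default}%")
print(f"Brand share with NPV in the objective:  {with_npv}%")
```

With identical short-term response curves, the only change between the two runs is whether the future term enters the objective. In this sketch the recommended brand share rises once it does, because the channel with the higher long-term multiplier is no longer judged on its short-term return alone.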

Athena described the shift at Quicken. Before a science-backed framework existed, her team had no defensible way to prove that short-term-only measurement was undervaluing certain channels. With long-term effects incorporated:

It gives us a better handle on understanding the long-term attributed value of other channels, and a more credible way for me and my team to justify our recommendations to the business.

That is the real outcome. Not a cleaner model output. A more credible conversation with the CFO, grounded in established advertising effectiveness research rather than instinct and advocacy.

Experimental and econometric studies point to the same structural gap. Incorporating long-term effects into optimization closes it. A demo shows exactly how doing so reshapes your objective function.

What Your Next Planning Cycle Will Cost You

Brand effects typically take 60 to 120 days to surface in observable business metrics. Your MMM runs weekly. By the time a brand budget reallocation becomes visible in the data, the next planning cycle has already locked in the same assumptions that caused the problem. Each cycle, long-term returns are written out of the objective function because the model has no architecture to capture them.

That is not a rounding error. It is a silent misallocation that compounds as long as the measurement gap remains open. The brands that close it this cycle will build the consumer consideration that determines whether their performance marketing works two years from now. The ones that do not will keep running efficient models on a shrinking foundation.

Frequently Asked Questions

Why does marketing mix modeling undervalue brand advertising?

MMM measures effects within short observation windows, typically days to a few weeks. Brand advertising builds awareness over months, a signal too slow-moving to isolate in a single firm’s weekly data. When the model cannot find the signal, it defaults to zero and allocates budget as if brand’s long-term contribution does not exist.

What are long-term brand effects and why do they matter for budget decisions?

Long-term brand effects are the revenue impact advertising generates beyond the immediate campaign window through sustained awareness and purchase intent. Across controlled experiments and econometric studies spanning multiple industries, these effects are frequently observed at approximately two to two and a half times the short-term effect. However, the exact magnitude varies by category, brand maturity, and advertising type.

Why is setting long-term brand effects to zero in MMM so damaging?

Zero is not a neutral assumption. The optimization engine treats it as fact, shifting budget toward channels with immediate returns and away from brand. Over time the pool of brand-aware consumers shrinks, CAC rises, and the damage compounds in ways hard to trace back to the original measurement decision.

Is adstock a reliable way to measure long-term brand effects in MMM?

Adstock works for short-term carryover over days to a couple of weeks. At longer horizons it requires pre-specifying the magnitude and timing of effects that firm-level data cannot determine, introducing subjectivity that degrades both short-term and long-term measurement quality.

How should brands incorporate long-term effects into marketing mix modeling and optimization?

Replace the zero default with empirically grounded multipliers calibrated to each brand’s industry, lifecycle stage, and advertising type. Apply these to measured short-term effects to compute the net present value of future returns, then place both figures in the optimization objective function so budget recommendations reflect the full expected value across the complete time horizon.